Project summary

Project no.: PN-III-P2-2.1-PED-2019-0352; Contract no.: 276PED/2020

Principal Investigator: Radu-Daniel Vatavu

Funded by UEFISCDI, Romania

Funding scheme: PNIII P2 - Increasing economic competitiveness through research, development and innovation (PED)

Running period: August 2020 - August 2022 (24 months)

Abstract

The goal of this project is to develop new input technology to increase the accessibility of smart wearables, such as glasses, watches, and rings, for people with upper-body motor impairments. To this end, we propose the concept of motor-streamlined input for wearables, which subsumes gesture input (in the form of touch, stroke-gestures, mid-air input, or head movements) and voice input according to a design, implementation, and evaluation paradigm centered on the specific motor abilities of the end user.

Figure 1. In contrast to smartphones with relatively large input surfaces for touch input (rightmost image), wearable devices, such as smartglasses, smart rings, and smartwatches (left and middle images), present specific interaction challenges for people with motor impairments.

Objectives

We are developing a TRL-4 experimental model for motor-streamlined input with smart wearables. Our concrete objectives are:

- Examine interactions performed by people with upper-body motor impairments with smart glasses, watches, and rings.

- Design and implement techniques (TRL-3) for effective input on smart glasses, watches, and rings.

- Integrate and validate input techniques in the form of an experimental model (TRL-4).

Expected results

The main result of this project will be an experimental model (TRL-4) to enable motor-streamlined use of smart wearables for users with upper-body motor impairments. Associated results are:

- Method to analyze gesture and voice input produced by people with motor impairments using wearable devices.

- Recognition results for gestures performed using smart glasses, watches, and rings.

- Experimental results and dataset of gestures performed using smart glasses, watches, and rings.

- Scientific publications in high-quality conferences and journals and yearly research reports.

Team

- Prof. Radu-Daniel Vatavu, Principal Investigator

- Dr. Ovidiu-Andrei Schipor

- Dr. Ovidiu-Ciprian Ungurean

- Alexandru-Ionuț Șiean (PhD candidate in Computer Science)

- Laura-Bianca Bilius (PhD candidate in Computer Science)

Publications

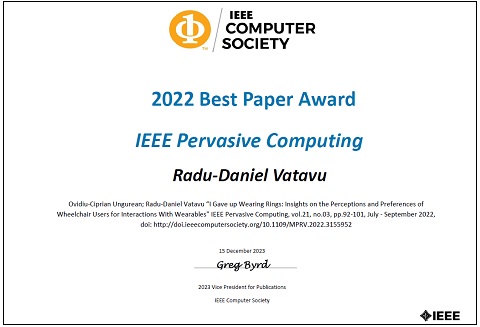

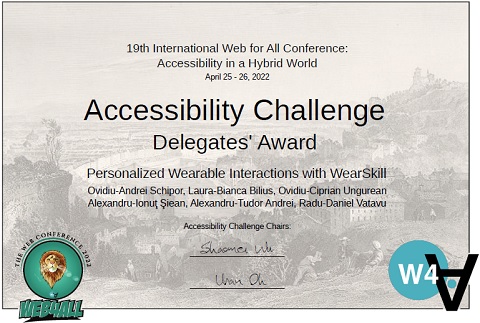

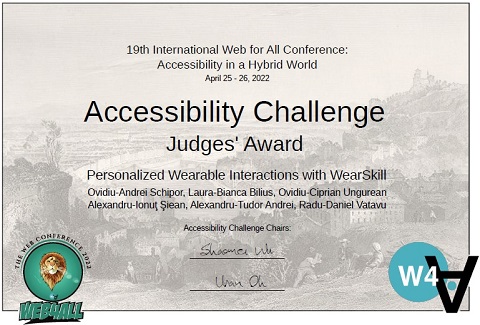

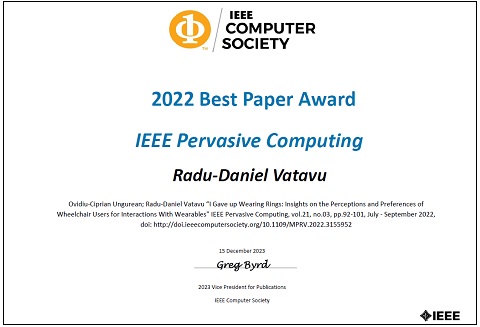

Awards

Media

Project reports (in Romanian)

- Scientific report 2020-2022 PDF

- Scientific report 2022 PDF

- Scientific report 2021 PDF

- Scientific report 2020 PDF

Other resources (software, diagrams, and datasets)

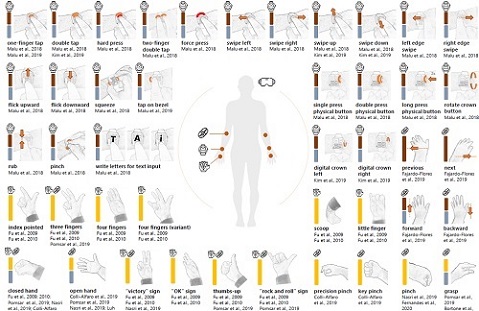

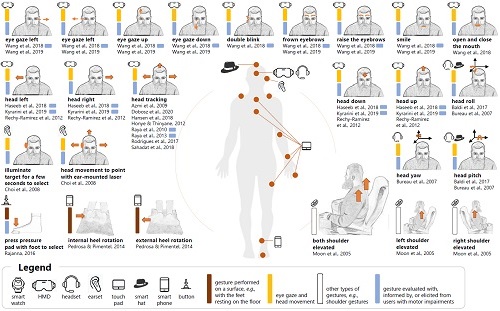

-

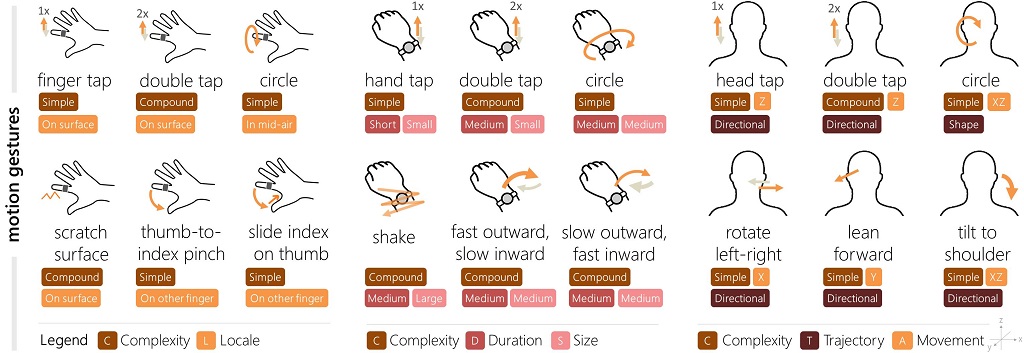

Hand gesture set for wearable interactions (high-resolution PDF )

and gestures performed using the head and feet (high-resolution PDF ).

These gestures represent a compilation of 92 possible interactions with smartglasses, head-mounted displays (HMDs), smartwatches,

earsets, headsets, on-body-located touch pads, smart armbands, and foot-mounted devices that were extracted, classified, and analyzed

during a Systematic Literature Review of the scientific literature on accessible wearable interactions.

Artwork copyright © 2021 Alexandru-Ionut Siean and Radu-Daniel Vatavu.

-

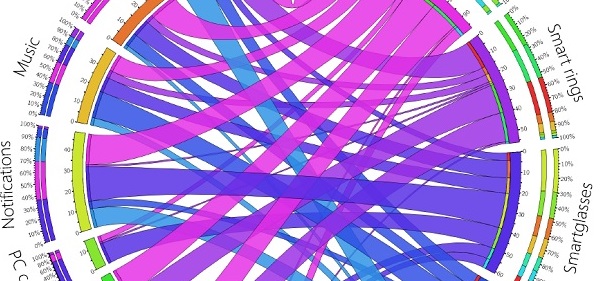

Association map highlighting preferences of users with motor impairments for applications of smart wearables (high-resolution PDF ).

-

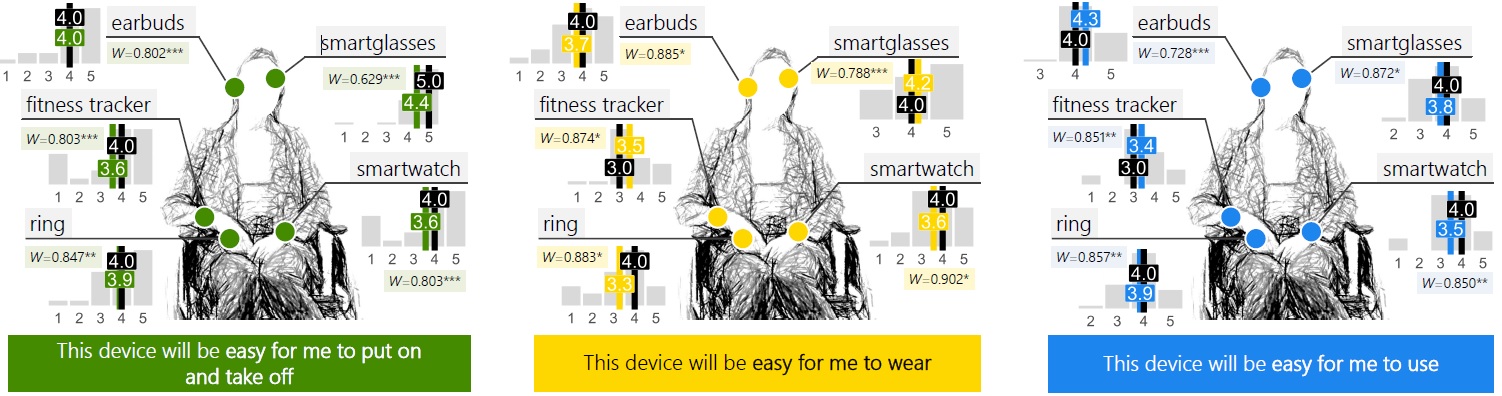

An overview of the perceptions of 21 people with motor impairments, wheelchair users, regarding smart wearable devices

(high-resolution PDF )

and a dataset of 2,625 records

about perceptions of fitness trackers, smartwatches, smartglasses, earbuds, and rings.

- Software applications demonstrating TRL-3 level implementation for various input modalities for smart wearables: (1) motion gesture input on the Samsung Galaxy Watch 3 (RAR), (2) touch gesture input on a smart ring implemented with the Gear Fit 2 device (RAR), and (3) voice input on the Vuzix Blade smartglasses (RAR). Software is released under this License.

- GearWheels software application (node.js, HTML/JavaScript) for conducting data collection experiments with wearable devices RAR | License

-

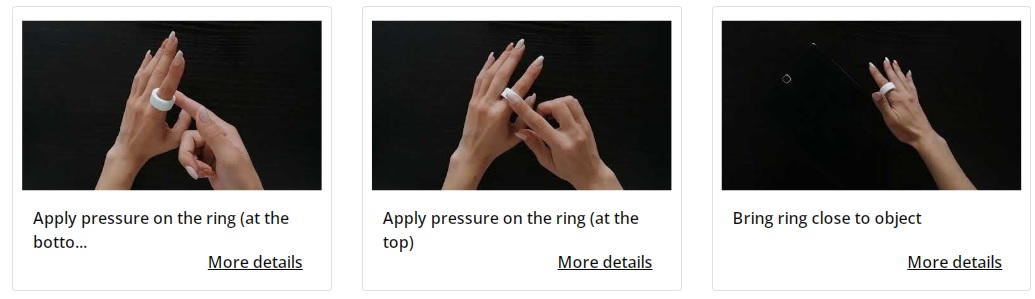

GestuRING web application for ring gestures: link .

GestuRING is a tool meant to assist HCI researchers and practitioners interested in designing gesture input for ring, ring-like, and ring-ready devices. GestuRING features a large amount of information that is readily available to inform design decisions about which ring gestures to effect which function in an interactive system and to inspire new gesture designs by building on a body of scientific knowledge that is systematically structured.

-

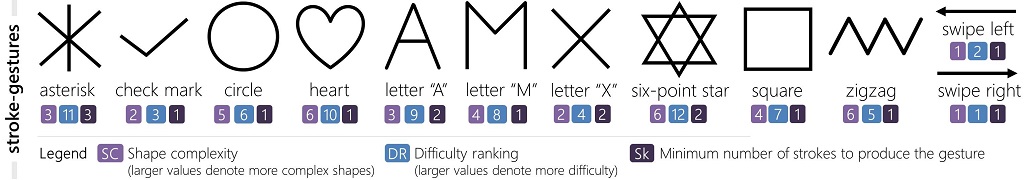

Two datasets are available here

regarding gesture input with smartwatches, glasses, and rings.

The first dataset contains 7,290 stroke-gesture samples collected from 28 participants,

of which 14 participants with upper-body motor impairments.

The second one contains 3,809 motion-gesture samples collected from the same participants.

- WearSkill software application (node.js, HTML/JavaScript) for personalized interactions with wearable devices for users with motor impairments. RAR | License